Coaxial vs optical vs HDMI: which is the best audio connection to use?

So many sockets, but which is superior?

While wireless audio is likely our future, cables will almost certainly remain part of our audio and home cinema set-ups for years to come. So it pays to know a thing or two about the different types available to you. You’ve seen the sockets, you probably own at least one audio cable of each kind, but which digital audio connection should you use? What are the pros and cons of each, and which gives you the best AV performance? Allow us to present a brief overview.

If you’ve ever owned a TV, DVD/Blu-ray player, set-top box, soundbar or AV receiver, chances are you’ll have come across a coaxial, optical or, more recently, HDMI connection. Those of you with a full-blown surround sound system almost certainly will have... unless an installer has done it all for you up until now!

All three connections are digital, of course. Coaxial and optical can only transmit audio data, while HDMI brings the added bonus of supporting both audio and video. If you aren't quite sure which connection to take advantage of, we hope this page helps...

Coaxial digital connection

Probably the least common connection when it comes to modern AV kit, coaxial digital transmits audio as an electrical signal.

The connector is a standard, circular RCA connector - the kind that’s found at either end of a pair of analogue audio cables (or 'interconnects').

But don’t be tempted to use a standard RCA phono cable in place of a dedicated coaxial digital cable. They look similar, and an analogue cable can work, but coaxial digital connections are designed for cable with a 75-ohm characteristic impedance, and analogue interconnects aren’t built to that specification, so it won’t work as well. An entry-level cable like the QED Performance Coaxial will do a fine job for most.
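Why does the cable's impedance matter? When a digital signal travelling along a cable reaches an input with a different impedance, part of it is reflected back towards the source, which can degrade the signal. The size of that reflection follows the standard transmission-line formula Γ = (Z_L - Z_0) / (Z_L + Z_0), where Z_0 is the cable's characteristic impedance and Z_L is the input's. Here's a rough Python sketch of that arithmetic; the 50-ohm cable figure is purely illustrative (analogue interconnects aren't built to any one impedance), and the function name is our own.

```python
def reflection_coefficient(cable_ohms: float, input_ohms: float) -> float:
    """Share of the signal voltage reflected where a cable with characteristic
    impedance `cable_ohms` meets an input with impedance `input_ohms`."""
    return (input_ohms - cable_ohms) / (input_ohms + cable_ohms)


# Hypothetical mismatch: a 50-ohm cable feeding a 75-ohm coaxial digital input.
mismatched = reflection_coefficient(50, 75)
# A proper 75-ohm digital cable into the same 75-ohm input.
matched = reflection_coefficient(75, 75)

print(f"mismatched: {mismatched:.2f}")
print(f"matched: {matched:.2f}")
```

In this example a fifth of the signal voltage bounces back at the mismatched junction (0.20), while the matched 75-ohm cable reflects nothing (0.00), which is why a dedicated digital cable is the safer choice.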

Coaxial might not be as widespread as its rival optical connection these days, but you'll still find it at the back of certain AV receivers, integrated amplifiers and TVs.


In our experience, a coaxial connection does tend to sound better than optical. One reason is that it has more bandwidth available, so it can support higher-quality audio up to 24-bit/192kHz, whereas optical is usually restricted to 24-bit/96kHz.
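To put those figures in context, the raw data rate of uncompressed (PCM) audio is simply bit depth × sample rate × number of channels. Here's a quick Python sketch of that arithmetic for two-channel audio; the helper name is our own, and it counts only the audio samples, not the extra framing data the connection also carries.

```python
def pcm_bitrate_mbps(bit_depth: int, sample_rate_hz: int, channels: int = 2) -> float:
    """Raw data rate of uncompressed PCM audio, in megabits per second."""
    return bit_depth * sample_rate_hz * channels / 1_000_000


# Stereo 24-bit audio at common sample rates.
for rate_hz in (48_000, 96_000, 192_000):
    mbps = pcm_bitrate_mbps(24, rate_hz)
    print(f"24-bit/{rate_hz // 1000}kHz stereo: {mbps:.3f} Mbps")
```

At 24-bit/96kHz that works out at 4.608 Mbps, and at 24-bit/192kHz it's 9.216 Mbps: doubling the sample rate doubles the data the connection has to carry.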

The main downside to a coaxial digital connection is the potential transfer of electrical noise between your kit. Noise is bad news when it comes to sound quality, but it exists in all AV components to one degree or another. Unfortunately, using a coaxial connection enables noise to travel along the cable from the source to your amplifier.

Also, coaxial doesn't have the bandwidth required to support high-quality surround sound formats such as Dolby TrueHD, DTS-HD Master Audio, Dolby Atmos and DTS:X. So, in a modern home cinema setting, its uses are quite limited.

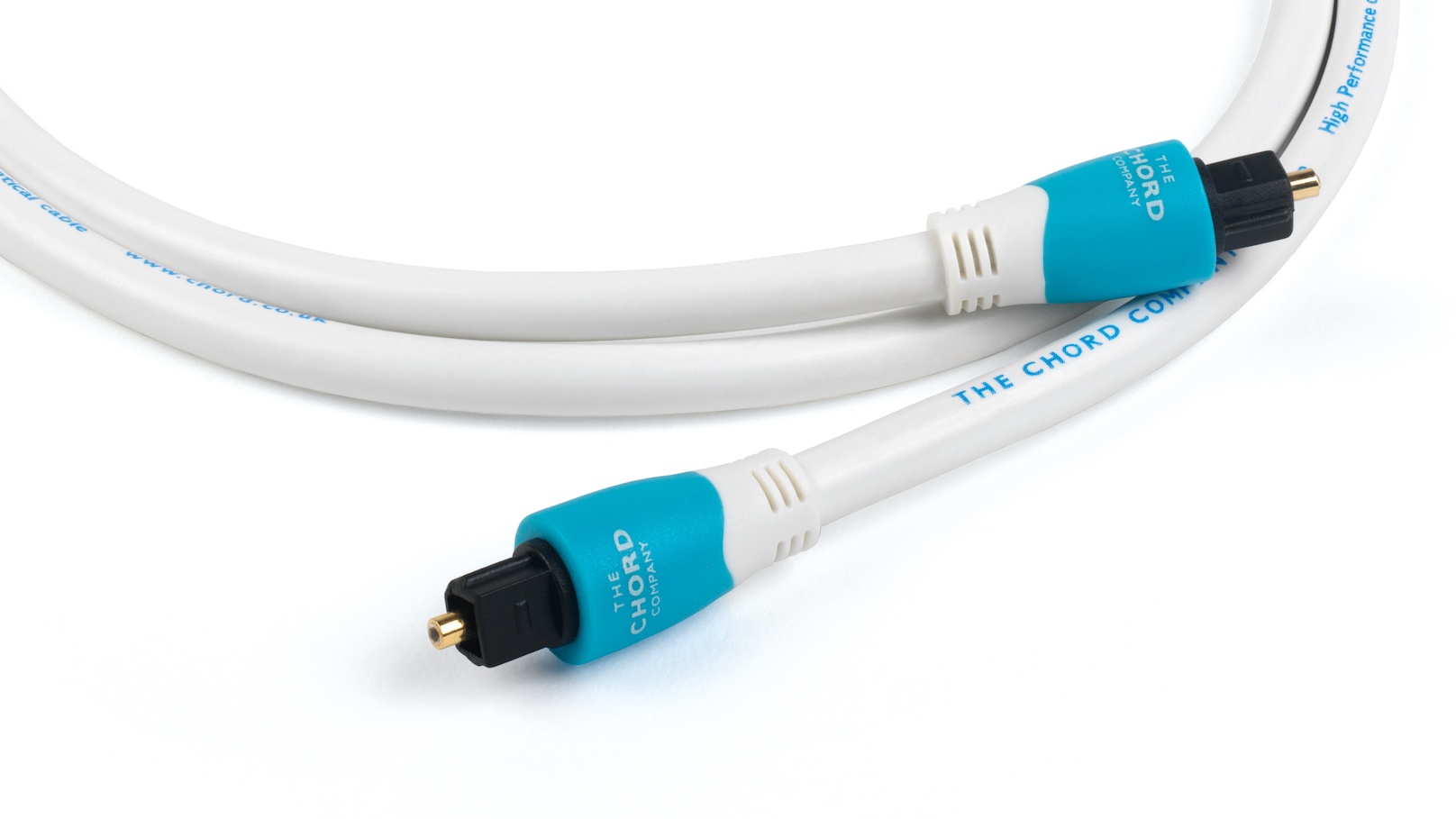

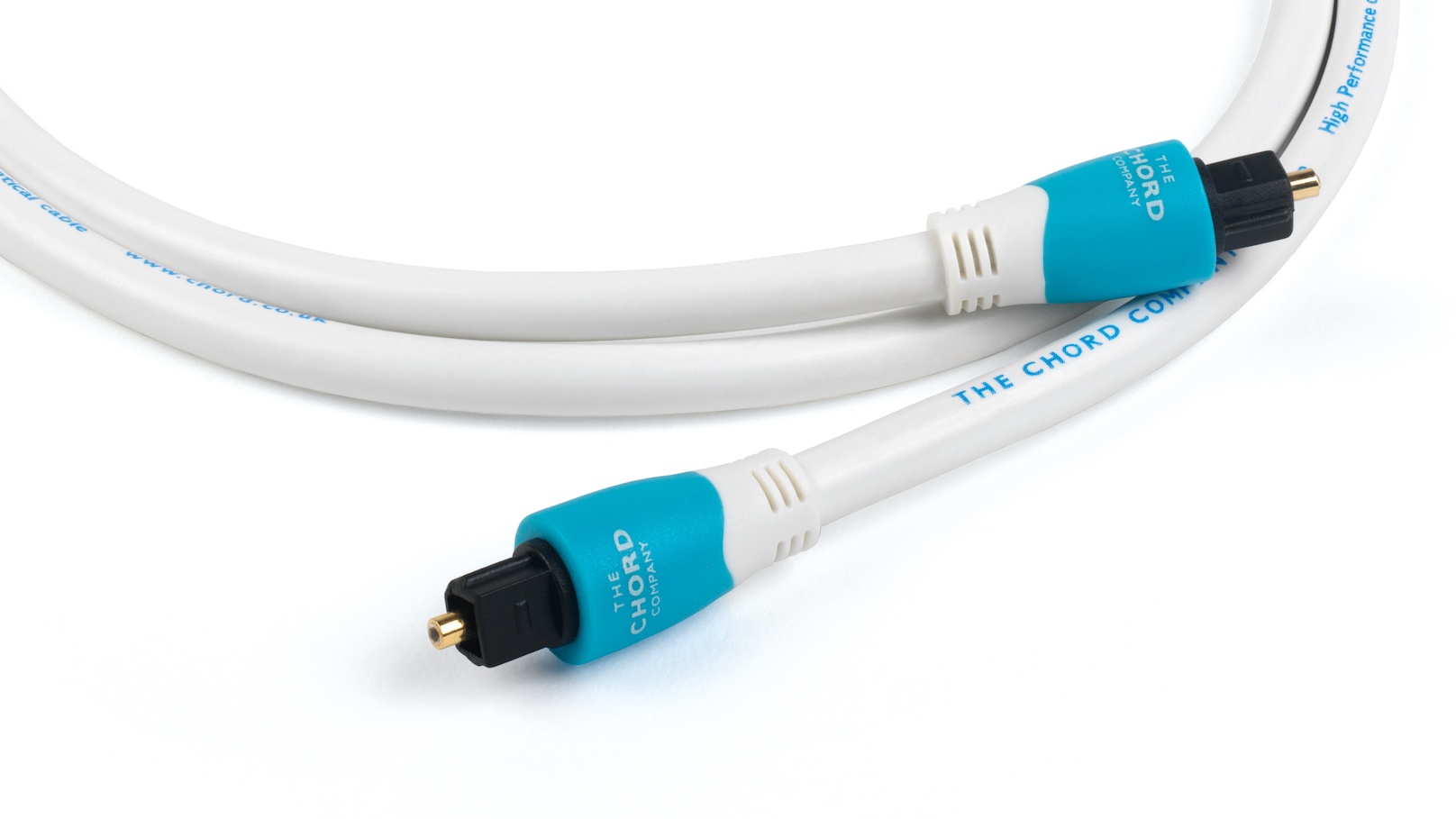

Optical digital connection

An optical digital connection uses light to transmit data through a cable’s optical fibres (which can be made from plastic, glass or silica). An optical cable doesn’t allow noise to pass from source to DAC circuitry the way a coaxial cable can, so it makes sense to use this socket when connecting straight into the DAC of a soundbar or AV receiver.

Traditionally, in a home cinema environment, optical connections tend to be used to transmit compressed Dolby Digital and DTS surround sound. Optical cables with a Toslink (Toshiba Link) connector slot into a matching socket on both source and receiver. Something like the QED Performance Graphite Optical is a good entry-level option.

Although HDMI has taken over as the main socket of choice for many manufacturers, optical outputs are still common on game consoles, Blu-ray players, set-top boxes and televisions. Optical inputs are found at the amplification or DAC end, e.g. on soundbars and AV receivers.

As with coaxial, one issue with optical is that it doesn’t have enough bandwidth for lossless audio formats such as the Dolby TrueHD or DTS-HD Master Audio soundtracks found on most Blu-rays and 4K Blu-rays. An optical connection also can’t carry more than two channels of uncompressed PCM audio. Then there's the risk of damage if an optical cable is bent too tightly.
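The two-channel limit comes from how the signal is structured. Both coaxial and optical carry the same S/PDIF-style stream (standardised as IEC 60958), in which each sample period is one frame made of two 32-bit subframes, one per channel, each with room for up to 24 bits of audio. The simplified Python model below, whose names are our own, ignores the housekeeping bits in each subframe and the separate packing scheme that lets compressed Dolby Digital and DTS ride in the same two slots; it only checks whether uncompressed PCM fits.

```python
# Simplified S/PDIF frame layout: two subframes per frame, one per channel,
# each carrying at most 24 audio bits (housekeeping bits ignored).
SUBFRAMES_PER_FRAME = 2
MAX_AUDIO_BITS_PER_SUBFRAME = 24


def spdif_pcm_capacity_mbps(sample_rate_hz: int) -> float:
    """Most uncompressed audio the link can carry: one frame per sample period."""
    return SUBFRAMES_PER_FRAME * MAX_AUDIO_BITS_PER_SUBFRAME * sample_rate_hz / 1_000_000


def pcm_bitrate_mbps(bit_depth: int, sample_rate_hz: int, channels: int) -> float:
    """Raw data rate of uncompressed PCM audio, in megabits per second."""
    return bit_depth * sample_rate_hz * channels / 1_000_000


def fits_as_pcm(channels: int, bit_depth: int) -> bool:
    """Uncompressed PCM fits only if every channel gets its own subframe."""
    return channels <= SUBFRAMES_PER_FRAME and bit_depth <= MAX_AUDIO_BITS_PER_SUBFRAME


rate_hz = 48_000
capacity = spdif_pcm_capacity_mbps(rate_hz)
for name, channels in (("stereo", 2), ("5.1", 6), ("7.1", 8)):
    need = pcm_bitrate_mbps(24, rate_hz, channels)
    print(f"{name}: {need:.3f} of {capacity:.3f} Mbps, fits: {fits_as_pcm(channels, 24)}")
```

At 48kHz the link offers 2.304 Mbps of PCM capacity, which is enough for 24-bit stereo, while uncompressed 7.1 would need 9.216 Mbps and, more fundamentally, eight channel slots rather than two.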

What about HDMI?

Launched in 2002, HDMI is a one-size-fits-all connection for video and audio. It boasts much higher bandwidth than optical, allowing for playback of lossless audio formats such as Dolby TrueHD and DTS-HD Master Audio. And unlike coaxial and optical, which compete for the same job, HDMI has no real rival.

HDMI inputs and outputs are a firm fixture on the best TVs, Blu-ray players, AV receivers and, increasingly, soundbars. An entry-level cable like the AudioQuest Pearl HDMI will suit a wide range of systems.

HDMI is a constantly evolving standard too, with new and improved versions offering more bandwidth and the capacity to carry more audio channels and formats such as Dolby Atmos and DTS:X. It also supports new and current video formats – including Ultra HD 4K resolution and the various HDR formats – as well as additional features such as high frame rate (HFR) and eARC (which can deliver up to 32 channels of audio).

The majority of TV and AV products launched over the last five years support HDMI version 2.0, but HDMI 2.1 (which supports 8K resolution content and next-gen gaming features such as Auto Low Latency Mode, or ALLM) is becoming more common among modern TVs and AV kit. If you've bought a TV from a major manufacturer in the last two or three years, it should sport at least one HDMI 2.1 socket.

So, which connection should you use?

The answer to this will depend on the kit you’re using. If it’s a straight choice between coaxial and optical, we’d go for the former. In our experience, a coaxial connection tends to produce better audio quality than optical, allowing for a higher level of detail and greater dynamics.

But we live in an age where convenience is king. HDMI is now the go-to connection for all things AV and it’s hard to argue against it if all the kit in your system chain sports that socket.

HDMI’s feature set, its upgradability and the fact that it can handle both audio and video mean you don’t need to worry about too many wires clogging up your system. And, best of all, you won't sacrifice performance.


Andy is Deputy Editor of What Hi-Fi? and a consumer electronics journalist with nearly 20 years of experience writing news, reviews and features. Over the years he's also contributed to a number of other outlets, including The Sunday Times, the BBC, Stuff, and BA High Life Magazine. Premium wireless earbuds are his passion but he's also keen on car tech and in-car audio systems and can often be found cruising the countryside testing the latest set-ups. In his spare time Andy is a keen golfer and gamer.